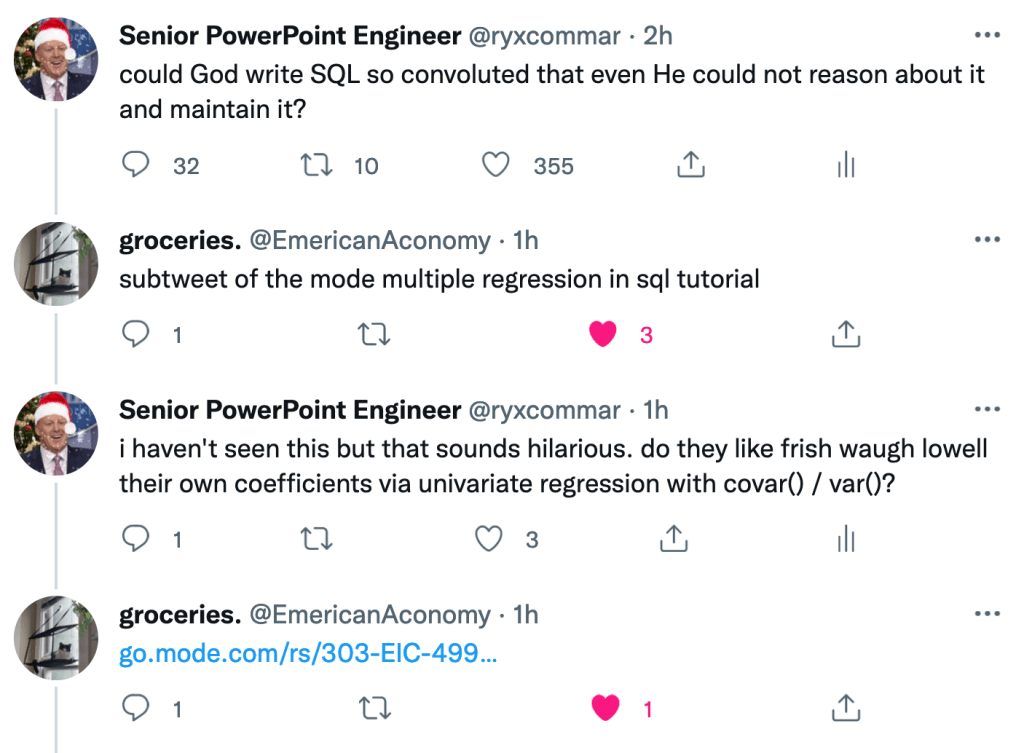

This post is inspired by someone dropping this in my mentions today:

The technique the authors use is cute, but it’s not a true arbitrary multivariate regression. They cheat a little bit using dummy variables for the majority of their coefficients. I respect it, but it’s not an arbitrary regression.

Fortunately, it is possible to do true multivariate regression using a real-valued design matrix. This post covers how and provides code that can fully replicate what I did.

Setup

Install dependencies:

pip install statsmodels pandas numpy duckdb

Let’s generate some data and setup a DuckDB connection:

import statsmodels.api as sm

import pandas as pd

import duckdb

import numpy as np

N = 50_000

df = pd.DataFrame(index=range(N))

df.index.name = "idx"

np.random.seed(42069)

df["const"] = 1

df["x1"] = np.random.normal(2, 4, size=N)

df["x2"] = np.random.normal(3, 2, size=N) + df["x1"] * np.random.normal(-1, 1, size=N)

df["x3"] = np.random.normal(2, 2, size=N) + df["x2"] * np.random.normal(-1, 1, size=N)

df["y"] = 2 + 3 * df["x1"] + 5 * df["x2"] - df["x3"] + np.random.normal(0, 3, size=N)

df.to_csv("fwl_generated_data.csv")

Univariate Regression

Let’s first start with a univariate regression:

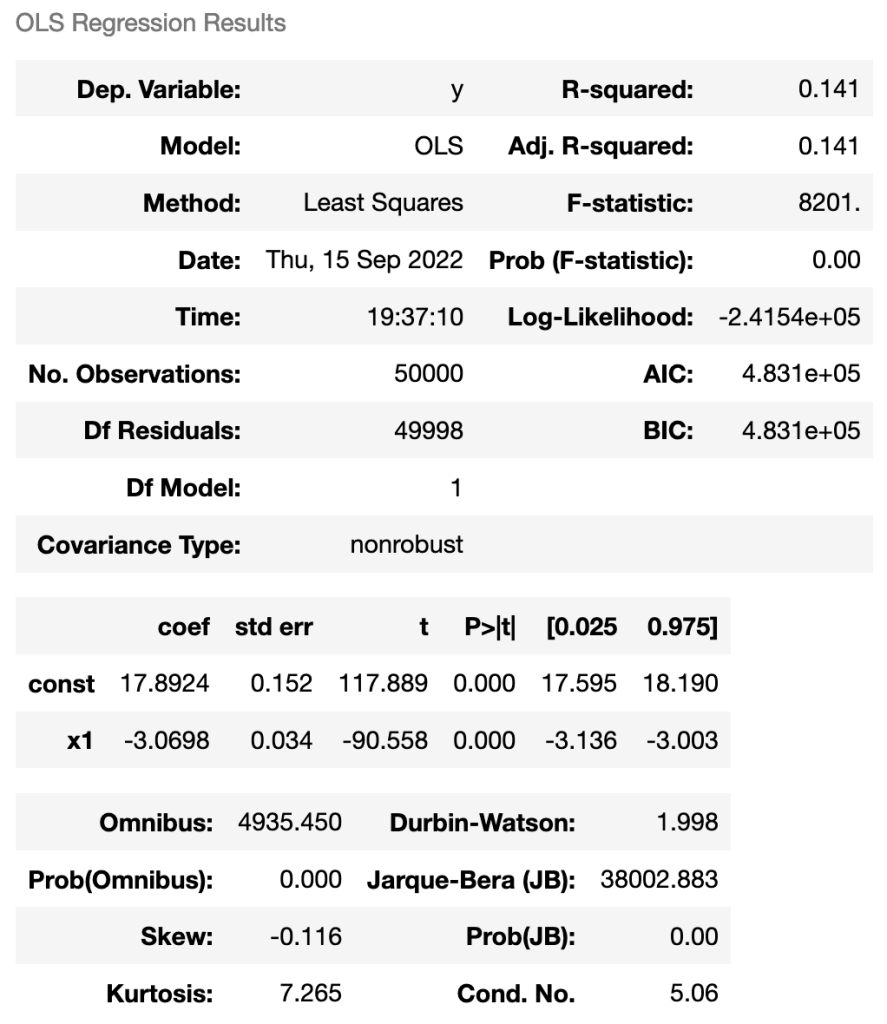

# Multivariate

sm.OLS(endog=df["y"], exog=df[["const", "x1"]]).fit().summary()

Output:

In a univariate regression, the coefficient is equal to the covariance of the centered y variable and centered x variable, divided by the variance of the centered x variable. We can cheat a bit and use the fact that both the numerator and denominator will be divided by the sample size. This gives us our “x” coefficient:

sum(y_centered * x1_centered) / sum(x1_centered * x1_centered)

Getting the constant term is basically a matter of walking backwards our process of centering the data. First, we add back in the mean of “y” we subtracted out originally. But that’s not enough– we also centered “x.” How can we walk that back? Just subtract out the mean of x times the coefficient we just calculated. This gives us our constant term:

avg(y) - avg(x1) * sum(y_centered * x1_centered) / sum(x1_centered * x1_centered) as const_coef

Let’s use that CSV we generated to run DuckDB and calculate the coefficients there:

univariate_regression_query = """

with base as (

select

y,

y - avg(y) over () as y_centered,

x1,

x1 - avg(x1) over () as x1_centered

from fwl_generated_data.csv

),

regress as (

select

avg(y) - avg(x1) * sum(y_centered * x1_centered) / sum(x1_centered * x1_centered) as const_coef,

sum(y_centered * x1_centered) / sum(x1_centered * x1_centered) as x1_coef

from base

)

select *

from regress

"""

con.execute(univariate_regression_query).df()

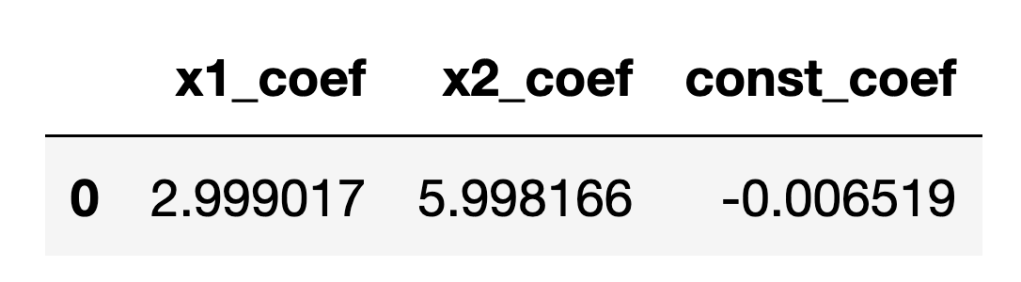

And our output is this:

Nice, we got a match!

Regression With 2 Predictors

The trickery behind what we will be using to generate multiple regression coefficients is the Frisch-Waugh-Lovell theorem (“FWL”). I have covered this theorem in a previous post here.

Long story short, we will be using the residuals of univariate regressions of x1 on x2 and vice-versa to construct new univariate regressions on y. Because we already know how to do univariate regression in SQL from the previous section, doing multiple regression is just a matter of stringing these pieces together.

Visually, constructing a single multiple regression coefficient using FWL looks like this:

Doing that twice gives us both coefficients.

(As you can tell from the animation, “residualizing” the “y” variable is not actually necessary for calculating the coefficients. We only need to “residualize” the regressors.)

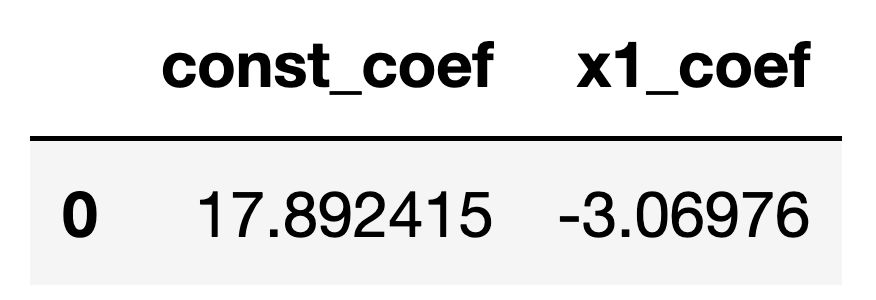

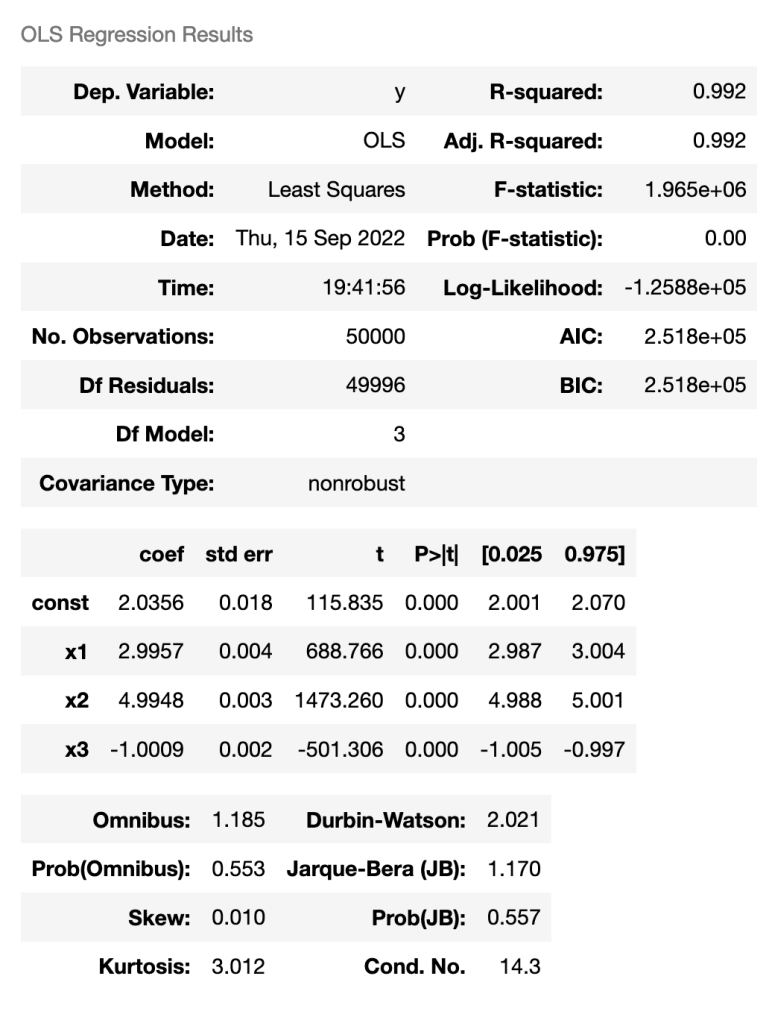

Here is the regression done in Statsmodels:

Here is the full code:

multiple_regression_query = """

with base as (

select

idx,

y,

y - avg(y) over () as y_centered,

x1,

x1 - avg(x1) over () as x1_centered,

x2,

x2 - avg(x2) over () as x2_centered

from fwl_generated_data.csv

),

univariate_regress as (

select

avg(x2) - avg(x1) * sum(x2_centered * x1_centered) / sum(x1_centered * x1_centered) as x1_const_coef,

sum(x2_centered * x1_centered) / sum(x1_centered * x1_centered) as x1_coef,

avg(x1) - avg(x2) * sum(x1_centered * x2_centered) / sum(x2_centered * x2_centered) as x2_const_coef,

sum(x1_centered * x2_centered) / sum(x2_centered * x2_centered) as x2_coef

from base

),

resids as (

select

y,

y_centered,

x1,

x1

- (select x2_coef from univariate_regress) * x2

- (select x2_const_coef from univariate_regress)

as x1_resid,

x2,

x2

- (select x1_coef from univariate_regress) * x1

- (select x1_const_coef from univariate_regress)

as x2_resid

from base

),

multiple_regression as (

select

sum(y_centered * x1_resid) / sum(x1_resid * x1_resid) as x1_coef,

sum(y_centered * x2_resid) / sum(x2_resid * x2_resid) as x2_coef,

avg(y)

- avg(x1) * sum(y_centered * x1_resid) / sum(x1_resid * x1_resid)

- avg(x2) * sum(y_centered * x2_resid) / sum(x2_resid * x2_resid)

as const_coef

from resids

)

select * from multiple_regression

"""

con.execute(multiple_regression_query).df()

The output:

Good God, we’ve done it!

But can we go even further?

Regression With 3 Predictors

You could probably tell that this was coming. That “x3” in the DataFrame we created wasn’t for nothing.

I almost didn’t do this out of laziness, but I figured people would get mad at me if I didn’t.

The compromise is that I will do it, but I won’t explain what is going on. Figuring out why this works is left as an exercise for the reader.

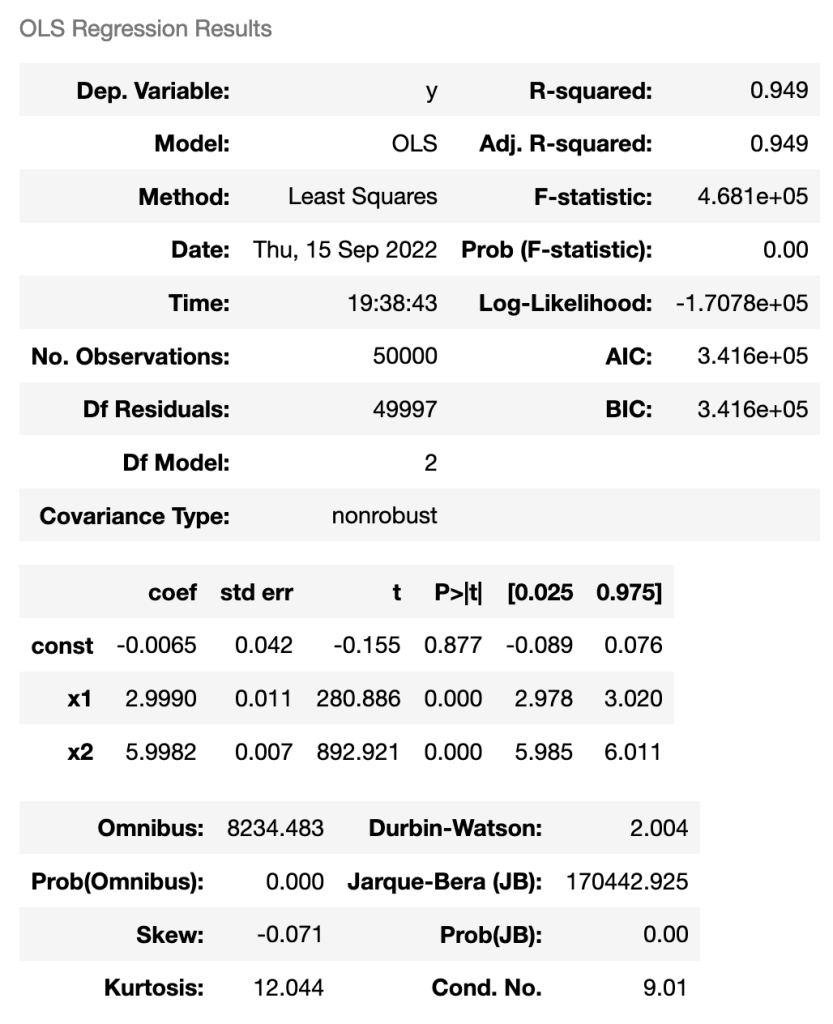

Here’s Statsmodels:

Here’s the SQL embedded in Python:

big_multiple_regression_query = """

with base as (

select

y,

y - avg(y) over () as y_centered,

x1,

x1 - avg(x1) over () as x1_centered,

x2,

x2 - avg(x2) over () as x2_centered,

x3,

x3 - avg(x3) over () as x3_centered

from fwl_generated_data.csv

),

------------------------------------------------------------

regress_1 as (

select

avg(x1) - avg(x2) * sum(x1_centered * x2_centered) / sum(x2_centered * x2_centered) as x1x2_const,

sum(x1_centered * x2_centered) / sum(x2_centered * x2_centered) as x1x2_coef,

avg(x1) - avg(x3) * sum(x1_centered * x3_centered) / sum(x3_centered * x3_centered) as x1x3_const,

sum(x1_centered * x3_centered) / sum(x3_centered * x3_centered) as x1x3_coef,

avg(x2) - avg(x1) * sum(x2_centered * x1_centered) / sum(x1_centered * x1_centered) as x2x1_const,

sum(x2_centered * x1_centered) / sum(x1_centered * x1_centered) as x2x1_coef,

avg(x2) - avg(x3) * sum(x2_centered * x3_centered) / sum(x3_centered * x3_centered) as x2x3_const,

sum(x2_centered * x3_centered) / sum(x3_centered * x3_centered) as x2x3_coef,

avg(x3) - avg(x1) * sum(x3_centered * x1_centered) / sum(x1_centered * x1_centered) as x3x1_const,

sum(x3_centered * x1_centered) / sum(x1_centered * x1_centered) as x3x1_coef,

avg(x3) - avg(x2) * sum(x3_centered * x2_centered) / sum(x2_centered * x2_centered) as x3x2_const,

sum(x3_centered * x2_centered) / sum(x2_centered * x2_centered) as x3x2_coef

from base

),

resids_1 as (

select

y,

y_centered,

x1,

x1_centered,

x2,

x2_centered,

x3,

x3_centered,

x1 - (select x1x2_coef from regress_1) * x2 - (select x1x2_const from regress_1) as x1x2_resid,

x1 - (select x1x3_coef from regress_1) * x3 - (select x1x3_const from regress_1) as x1x3_resid,

x2 - (select x2x1_coef from regress_1) * x1 - (select x2x1_const from regress_1) as x2x1_resid,

x2 - (select x2x3_coef from regress_1) * x3 - (select x2x3_const from regress_1) as x2x3_resid,

x3 - (select x3x1_coef from regress_1) * x1 - (select x3x1_const from regress_1) as x3x1_resid,

x3 - (select x3x2_coef from regress_1) * x2 - (select x3x2_const from regress_1) as x3x2_resid,

from base

),

regress_2 as (

select

sum(x1_centered * x2x3_resid) / sum(x2x3_resid * x2x3_resid) as x1_x2x3_coef,

sum(x1_centered * x3x2_resid) / sum(x3x2_resid * x3x2_resid) as x1_x3x2_coef,

avg(x1)

- avg(x2) * sum(x1_centered * x2x3_resid) / sum(x2x3_resid * x2x3_resid)

- avg(x3) * sum(x1_centered * x3x2_resid) / sum(x3x2_resid * x3x2_resid)

as x1_const,

sum(x2_centered * x1x3_resid) / sum(x1x3_resid * x1x3_resid) as x2_x1x3_coef,

sum(x2_centered * x3x1_resid) / sum(x3x1_resid * x3x1_resid) as x2_x3x1_coef,

avg(x2)

- avg(x1) * sum(x2_centered * x1x3_resid) / sum(x1x3_resid * x1x3_resid)

- avg(x3) * sum(x2_centered * x3x1_resid) / sum(x3x1_resid * x3x1_resid)

as x2_const,

sum(x3_centered * x2x1_resid) / sum(x2x1_resid * x2x1_resid) as x3_x2x1_coef,

sum(x3_centered * x1x2_resid) / sum(x1x2_resid * x1x2_resid) as x3_x1x2_coef,

avg(x3)

- avg(x1) * sum(x3_centered * x1x2_resid) / sum(x1x2_resid * x1x2_resid)

- avg(x2) * sum(x3_centered * x2x1_resid) / sum(x2x1_resid * x2x1_resid)

as x3_const

from resids_1

),

resids_2 as (

select

y,

y_centered,

x1,

x1_centered,

x2,

x2_centered,

x3,

x3_centered,

x1

- (select x1_x2x3_coef from regress_2) * x2

- (select x1_x3x2_coef from regress_2) * x3

- (select x1_const from regress_2)

as x1_resid,

x2

- (select x2_x1x3_coef from regress_2) * x1

- (select x2_x3x1_coef from regress_2) * x3

- (select x2_const from regress_2)

as x2_resid,

x3

- (select x3_x1x2_coef from regress_2) * x1

- (select x3_x2x1_coef from regress_2) * x2

- (select x3_const from regress_2)

as x3_resid,

from base

),

regress_3 as (

select

sum(y_centered * x1_resid) / sum(x1_resid * x1_resid) as x1_coef,

sum(y_centered * x2_resid) / sum(x2_resid * x2_resid) as x2_coef,

sum(y_centered * x3_resid) / sum(x3_resid * x3_resid) as x3_coef,

avg(y)

- avg(x1) * sum(y_centered * x1_resid) / sum(x1_resid * x1_resid)

- avg(x2) * sum(y_centered * x2_resid) / sum(x2_resid * x2_resid)

- avg(x3) * sum(y_centered * x3_resid) / sum(x3_resid * x3_resid)

as const_coef

from resids_2

)

select * from regress_3

"""

con.execute(big_multiple_regression_query).df()

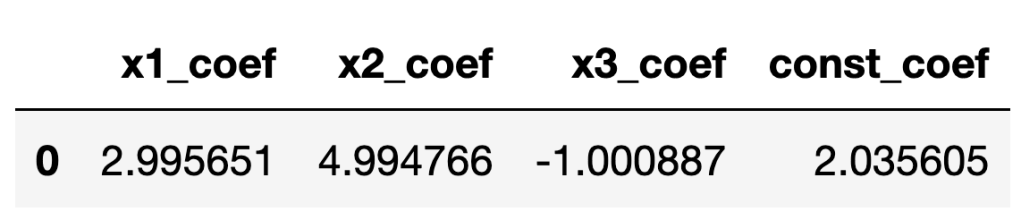

Here’s the output of the SQL:

And there you have it! Multiple regression with 3 predictors, done in SQL, using nothing more complex than AVG() and SUM().

You must be logged in to post a comment.