This is the 1st article in a 2-part series on the use of AI in hiring. The 2nd part will be available on Wednesday, January 8th.

Not long ago, Arvind Narayanan put out a presentation called “How to recognize AI snake oil.” It’s excellent, and I highly recommend reading it in full. He also posted a Twitter thread about it.

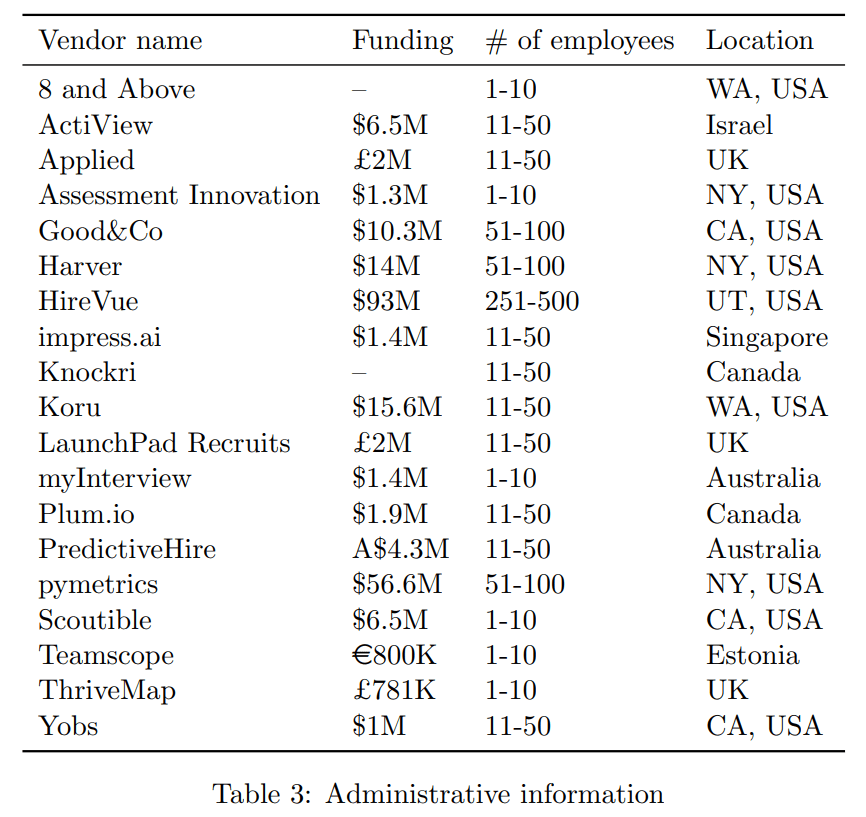

One example of AI snake oil Dr. Narayanan seems to find especially egregious is the use of AI to assess the quality of prospective job candidates. He points to this table from Raghavan et al. 2019 of a plethora of companies trying to develop AI tools to assess the quality of job candidates:

Dr. Narayanan’s criticism of these snake oil peddlers is especially cogent and articulate, but it’s not atypical; many AI researchers and statisticians had already been ringing the alarm bells about how assessing job candidates using AI can lead to perverse outcomes.

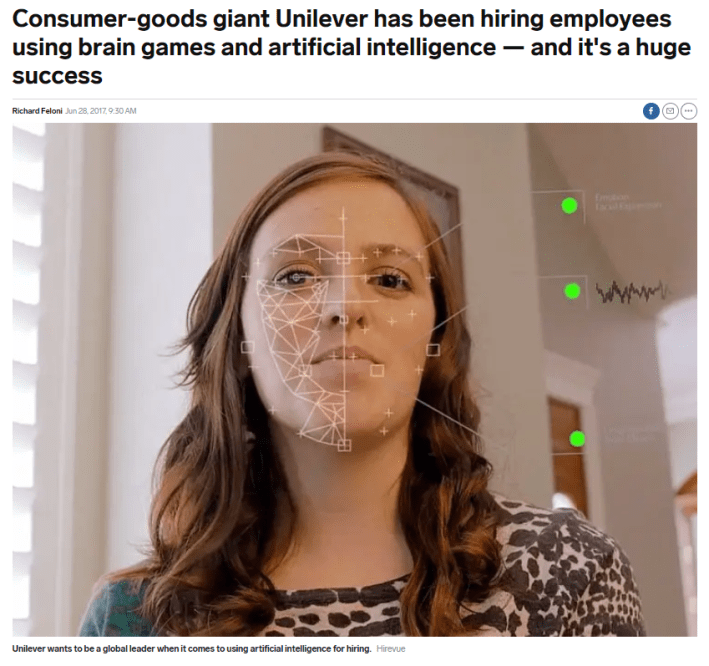

There are numerous challenges to using AI to help score candidates’ CVs and resumes; some of these challenges are covered in Dr. Narayanan’s talk. Many of the aforementioned companies also take the approach of digitally scanning prospective workers during a video interview. This approach is not a mere “challenge” or “shortcoming,” though. It is an absurd, irredeemable pseudoscience that Dr. Narayanan describes as “an elaborate random number generator.” If you think such video technology bears a striking resemblance to the old 19th-century racist pseudoscience of phrenology, you’re not alone.

AI Does Not Fix Hiring Discrimination

I agree with Dr. Narayanan’s assessment that using AI to predict social outcomes is “fundamentally dubious,” but I don’t believe that AI is doomed to always be worse than humans at assessing job candidates for quality. This is not because we have reason to believe AI will ever be particularly good at making hiring decisions, but because, in my view, humans are pretty bad at making these hiring assessments to begin with. So long as AI can perform slightly better than a checklist plus a coin flip, it seems like AI would be at least on par with humans.

I also don’t believe that AI is doomed to forever discriminate in hiring. I think it’s hypothetically possible to design an AI that doesn’t have a single racist or sexist kilobyte in it.

What’s less clear is why anyone should believe that the average AI system would be better at reducing hiring discrimination than the average traditional hiring practice. The idea AI proponents seem to have is that AI is more “objective,” and because there is less discretion involved, it is therefore less discriminatory. This is silly logic: if an employer made a rule that said, “hire the 5th white person that walks through the door,” this rule would be trivial to enforce objectively. But obviously it is the rule itself that is discriminatory, not any discretionary wiggle room you can squeeze out of the rule. If your response to this is “actually, the choice of which rule to use was subjective, so subjectivity is still the problem,” then yes, that’s the point! Nothing in the process of building, training, and implementing an AI is ordained by God. The use of AI is up to an employer’s discretion, and the AI may just end up enforcing racist rules objectively.

None of this is hypothetical. Amazon spent what I can only assume is millions of dollars on a now-defunct resume-assessing AI that discriminated against women as measured by the disparate impact it had on the scores of female applicants. The reasons why it didn’t work out are worth examining: after all, if the fancy, Big Tech data scientists at Amazon can’t get AI to work the way they want it to, then what hope do the rest of us have?
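
The reporting doesn’t say exactly how the disparate impact of Amazon’s tool was quantified. One common yardstick in U.S. employment practice is the “four-fifths rule” from the EEOC’s Uniform Guidelines on Employee Selection Procedures: if a group’s selection rate is less than 80% of the rate for the most-selected group, that is generally treated as evidence of adverse impact. A minimal sketch of that check, assuming simple (selected, applicants) counts per group; the function names and numbers are hypothetical:

```python
from typing import Dict, List, Tuple


def impact_ratios(groups: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Each group's selection rate divided by the highest group's selection rate.

    `groups` maps a group label to (number selected, number of applicants).
    """
    rates = {}
    for name, (selected, applicants) in groups.items():
        if applicants <= 0 or not 0 <= selected <= applicants:
            raise ValueError(f"bad counts for group {name!r}")
        rates[name] = selected / applicants
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no group had anyone selected")
    return {name: rate / top_rate for name, rate in rates.items()}


def four_fifths_flags(groups: Dict[str, Tuple[int, int]],
                      threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths (80%) threshold."""
    return [name for name, ratio in impact_ratios(groups).items() if ratio < threshold]


# Hypothetical screening outcomes, NOT Amazon's actual numbers.
screened = {"women": (30, 200), "men": (60, 200)}
print(impact_ratios(screened))      # {'women': 0.5, 'men': 1.0}
print(four_fifths_flags(screened))  # ['women']
```

Comparing against the most-selected group, rather than a fixed “reference” group, mirrors how the four-fifths rule is usually stated.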

The last time I pointed to the reporting on Amazon’s tool, some detractors seemed to suggest that if Amazon’s AI is discriminating against women, then it might mean that the women are inferior job candidates for these roles. This constitutes a serious misunderstanding of how AI is actually calibrated: AI has no predefined conception of what a “good” or “bad” job candidate is. These AI systems don’t even know what “job candidates” are the way that human beings do: they are simply trained to identify correlations and patterns in the scores given by actual human beings to a bunch of resume documents. If the patterns used to train the AI are biased against women, then the AI will spit out results that are biased against women. This principle is usually summarized as “garbage in, garbage out,” or “GIGO” for short.
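
To make GIGO concrete, here is a toy sketch, not a description of how Amazon’s or any vendor’s system actually worked. It assumes synthetic “resumes” reduced to two hypothetical features, a skill score and an `is_female` flag, with labels from a simulated human rater who docks women a fixed amount. Nothing in the training code says anything about gender being good or bad; a logistic regression fit to the rater’s labels simply absorbs the rater’s penalty as a negative weight on `is_female`. It uses plain full-batch gradient descent (rather than stochastic gradient descent) only so the result is deterministic.

```python
import math
import random
from typing import List, Tuple

Row = Tuple[Tuple[float, float, float], float]  # (features, label)


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def make_resumes(n: int, rater_bias: float, seed: int = 0) -> List[Row]:
    """HYPOTHETICAL synthetic data: (intercept, skill, is_female) plus a rater's label.

    The simulated human rater judges on skill but docks women `rater_bias` points.
    """
    rng = random.Random(seed)
    rows: List[Row] = []
    for _ in range(n):
        skill = rng.gauss(0.0, 1.0)
        is_female = 1.0 if rng.random() < 0.5 else 0.0
        rater_score = skill - rater_bias * is_female + rng.gauss(0.0, 0.5)
        rows.append(((1.0, skill, is_female), 1.0 if rater_score > 0 else 0.0))
    return rows


def train_logistic(rows: List[Row], lr: float = 1.0, steps: int = 300) -> List[float]:
    """Fit logistic-regression weights by full-batch gradient descent on mean log loss."""
    w = [0.0, 0.0, 0.0]  # [intercept, skill, is_female]
    n = len(rows)
    for _ in range(steps):
        grad = [0.0, 0.0, 0.0]
        for x, y in rows:
            err = sigmoid(w[0] * x[0] + w[1] * x[1] + w[2] * x[2]) - y
            for j in range(3):
                grad[j] += err * x[j] / n
        w = [w[j] - lr * grad[j] for j in range(3)]
    return w


if __name__ == "__main__":
    # Same seed -> identical resumes; only the rater's labels differ.
    fair = train_logistic(make_resumes(1000, rater_bias=0.0))
    biased = train_logistic(make_resumes(1000, rater_bias=1.0))
    print(f"is_female weight, unbiased rater: {fair[2]:+.2f}")
    print(f"is_female weight, biased rater:   {biased[2]:+.2f}")
```

Because both runs draw the same resumes, any gap between the two `is_female` weights comes entirely from the labels, which is the whole point of “garbage in, garbage out.”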

The GIGO adage doesn’t suddenly go away just because you’re using stochastic gradient descent and deep learning, but many Silicon Valley hype men get paid lavishly to argue otherwise. Loren Larsen, HireVue’s CTO, argues that human decision-makers are the “ultimate black box,” which is an allusion to the criticism commonly levied at AI algorithms as being unaccountable black boxes. This is the best response Larsen has to the criticism that AI for hiring decisions is a “pseudoscience,” and it doesn’t even make much sense: you can simply ask a human how they made a decision, and if you ask nicely, they will probably tell you. You can’t ask an AI how it made a decision. Furthermore, it’s almost impossible to reverse engineer an AI that utilizes the sorts of digital video data that HireVue says it uses to help you make automated hiring decisions. You can test how an AI scores resumes by changing a word here or there to “control” for the rest of the resume content, but how exactly do you do that for videos? Digitally alter how much a job candidate blinks, or alter the specific ways in which they move their mouth?
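
The word-swapping test described above can be sketched as a small counterfactual audit: score a resume, swap one term, rescore, and report the difference while everything else stays fixed. The scorer here, `toy_score`, is a hypothetical stand-in for a black-box model with a deliberately planted bias; in a real audit you would call the vendor’s scoring function instead, and the term pairs are illustrative assumptions.

```python
import re
from typing import Callable, Dict, List, Tuple

Scorer = Callable[[str], float]


def swap_term(text: str, old: str, new: str) -> str:
    """Replace whole-word occurrences of `old` with `new` (case-sensitive)."""
    # A function replacement avoids re.sub interpreting backslashes in `new`.
    return re.sub(r"\b" + re.escape(old) + r"\b", lambda _m: new, text)


def counterfactual_gaps(score: Scorer, resume: str,
                        pairs: List[Tuple[str, str]]) -> Dict[Tuple[str, str], float]:
    """Score change when each `old` term is swapped for `new`, all else held fixed."""
    base = score(resume)
    gaps: Dict[Tuple[str, str], float] = {}
    for old, new in pairs:
        edited = swap_term(resume, old, new)
        if edited != resume:  # skip pairs whose term doesn't appear
            gaps[(old, new)] = score(edited) - base
    return gaps


def toy_score(resume: str) -> float:
    """HYPOTHETICAL black-box scorer with a planted penalty, for demonstration only."""
    text = resume.lower()
    return (2.0 * text.count("python")
            + 1.0 * text.count("captain")
            - 1.5 * text.count("women's"))


resume = "Captain of the women's chess club. Five years of Python experience."
pairs = [("women's", "men's"), ("chess", "debate"), ("Java", "Rust")]
print(counterfactual_gaps(toy_score, resume, pairs))
```

Here the planted penalty surfaces as a +1.5 gap for swapping “women's” with “men's”, the neutral chess/debate swap moves nothing, and the Java/Rust pair is skipped because neither word appears. The technique only works because text comes in discrete, swappable tokens; there is no equally clean edit for a video.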

The rest of Larsen’s counterarguments range from completely irrelevant (“When 1,000 people apply for one job, 999 people are going to get rejected, whether a company uses AI or not.”) to flat-out untrue (“People are rejected all the time based on how they look, their shoes, how they tucked in their shirts and how ‘hot’ they are. Algorithms eliminate most of that in a way that hasn’t been possible before.”).

The Public Discourse

Convincing the public of any of this is an uphill battle. People seem to be split on whether discrimination is much of a problem to begin with, and whether AI can help fix any discrimination that exists is another question entirely. It does not help that experts are being drowned out by corporations and moneyed interests trying to sell the public on the idea that their products work.

The last time a major politician suggested that algorithms can be biased, conservative outlets made fun of the notion.

Other than the brief news cycle devoted to the AOC quote and the occasional comments section of articles that periodically broach the topic, we don’t have much information on what the public actually thinks about this.

You can find discussions of AI discrimination in publications aimed at the technologically savvy and in the occasional mainstream outlet, such as TIME Magazine or the New York Times. However, it’s not clear that this outreach works. The TIME article on facial recognition technology points out that facial recognition AI is more likely to misgender black faces than white faces, and explains why in exactly one sentence: “These systems are often trained on images of predominantly light-skinned men.” The article does not really press the point that the humans designing these systems are at fault. I’m left wondering how many casual readers may think, “well, this all sounds unfortunate, but maybe black faces are harder to get right,” and not grasp that there is a real problem here that the humans designing these systems already have the capability to fix (e.g. by adding more training data for black faces).

The New York Times article by Jamie Condliffe linked above notes that if the data the AI is trained on is biased, then the algorithm will be biased. These are actually two conceptual leaps that may be news to a lot of people: first, the notion that AI is “trained” on data as opposed to being engineered deliberately; second, the notion that data may contain bias.

An earlier NYT op-ed by Dr. Ifeoma Ajunwa goes into slightly more detail, describing the issue with AI as a negative feedback loop and opting not to discuss particulars like training data. She never mentions where the racial discrimination comes in; a casual reader may think that what she describes is simply the AI getting better at picking good candidates after receiving feedback that the previous candidates were good.

I don’t mean to come off as too scornful of the people writing these pieces, all of whom are 100% correct and are doing a great public service by writing about these challenges. I’m just not sure how many people are being convinced. This is not the fault of the writers: explaining how AI works to lay audiences is hard, and convincing people that some tech companies are making bogus tech may be especially hard considering that Americans love and trust the major tech giants Amazon and Google more than any governmental institution besides the military.

What Does the Public Think About AI in Hiring?

To understand what the public believes about all this, I collected responses from 89 U.S. residents through Amazon Mechanical Turk. Participants completed a survey about general sentiments toward the labor market and hiring discrimination. (133 participants were surveyed in total, but 44 responses were thrown out due to inconsistent answers to reverse-ordered questions. All survey takers were compensated $1.25 for their time, regardless of whether their answers were usable.)
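
The exact rule used to discard the 44 inconsistent responses isn’t spelled out here. One common approach, sketched below under stated assumptions (a 1–5 agree/disagree scale, hypothetical item names, and a tolerance of one scale point), pairs a statement with a reverse-worded version and drops anyone whose two answers fail to mirror each other after reverse-coding.

```python
from typing import Dict, List, Tuple

LIKERT_MAX = 5  # assumption: answers are on a 1-5 agree/disagree scale


def is_consistent(answers: Dict[str, int], pairs: List[Tuple[str, str]],
                  tolerance: int = 1) -> bool:
    """True if every reverse-worded item roughly mirrors its forward-worded twin."""
    for forward, reverse in pairs:
        # Reverse-code: on a 1-5 scale, 5 becomes 1, 4 becomes 2, and so on.
        recoded = (LIKERT_MAX + 1) - answers[reverse]
        if abs(answers[forward] - recoded) > tolerance:
            return False
    return True


def filter_respondents(responses: List[Dict[str, int]], pairs: List[Tuple[str, str]],
                       tolerance: int = 1) -> Tuple[List[Dict[str, int]], int]:
    """Return the consistent responses and the number dropped."""
    kept = [r for r in responses if is_consistent(r, pairs, tolerance)]
    return kept, len(responses) - len(kept)


# HYPOTHETICAL items: "q_fair" = "Employers generally hire fairly",
# "q_fair_rev" = "Employers generally do not hire fairly".
pairs = [("q_fair", "q_fair_rev")]
responses = [
    {"q_fair": 5, "q_fair_rev": 1},  # mirrors exactly -> kept
    {"q_fair": 5, "q_fair_rev": 5},  # agrees with both statements -> dropped
    {"q_fair": 4, "q_fair_rev": 1},  # off by one after recoding -> kept
]
kept, dropped = filter_respondents(responses, pairs)
print(len(kept), dropped)  # 2 1
```

A stricter tolerance of zero, or requiring several pairs to agree, would drop more respondents; which rule is appropriate depends on the survey.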

The labor market questions were partly meant to control for attitudes toward the labor market, since I suspected that MTurk users (who are atypical and often poorly compensated participants in the broader labor market) might be unusually pessimistic about the labor market and more suspicious of employers. Based on responses to these questions, MTurk users did not appear to be pessimistic about employers or the broader labor market.

Near the end of the survey, participants were presented with the following prompt:

Some employers are experimenting with using artificial intelligence to assist in making hiring decisions.

Some AI researchers are hopeful that the use of AI in hiring will reduce racial disparities in the job market because AI make more objective decisions.

Other AI researchers are worried that the use of AI in hiring may increase racial disparities in the job market because AI is trained using biased data.

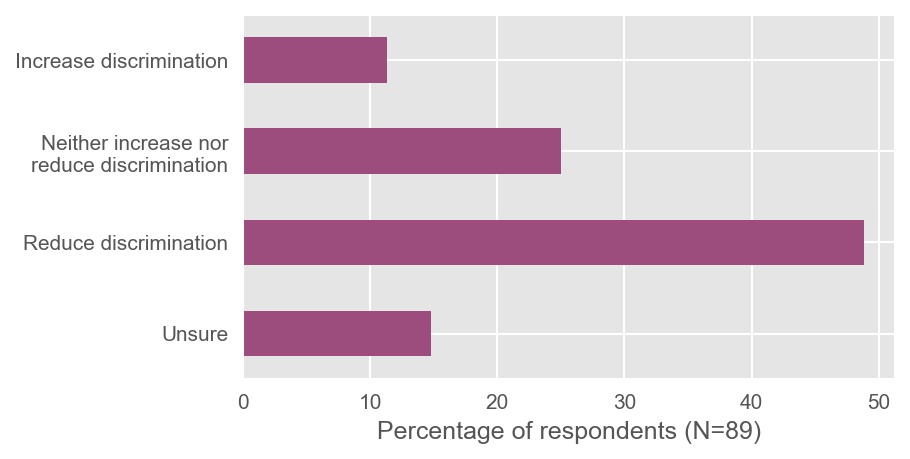

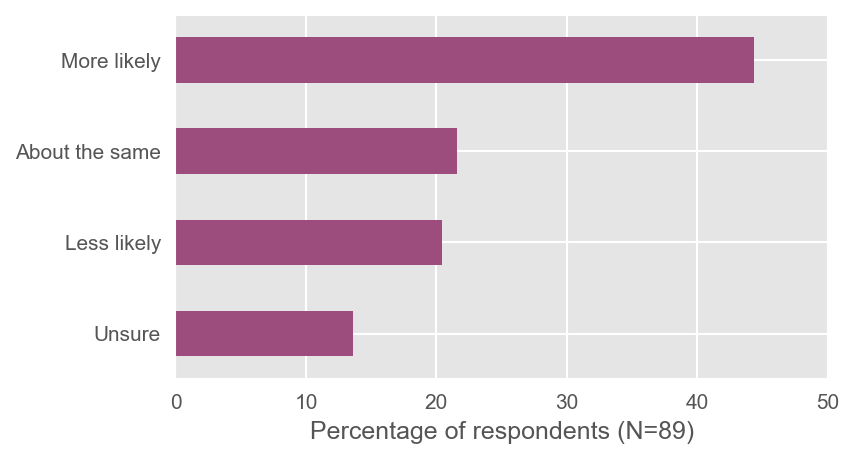

Participants were then asked the following two questions, with the distribution of responses provided below the questions:

“How do you think the use of artificial intelligence in hiring decisions will affect discrimination in the job market?”

“Do you think artificial intelligence is more or less likely to improve the quality of a workforce when used to make hiring decisions?”

When presented with both the argument for and the argument against AI, participants were far more likely to respond optimistically about AI hiring technology: 49% of participants believed that AI in hiring would reduce discrimination, and 44% believed that using AI in hiring would improve the quality of the workforce. If these results are to be believed, then AI technology is not being foisted upon the public despite protestations and concerns; rather, much of the public seems to believe the introduction of this technology is good.
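
With only 89 respondents, these shares carry sizable sampling error. As a rough gauge, the sketch below computes a Wilson score interval (one standard choice for a binomial proportion) around each reported share. It treats the sample as a simple random sample, which an MTurk sample is not, so the interval reflects sampling noise only and says nothing about how representative MTurk workers are.

```python
import math
from typing import Tuple


def wilson_interval(p_hat: float, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives roughly 95%)."""
    if n <= 0 or not 0.0 <= p_hat <= 1.0:
        raise ValueError("need n > 0 and 0 <= p_hat <= 1")
    z2 = z * z
    denom = 1.0 + z2 / n
    center = (p_hat + z2 / (2 * n)) / denom
    half_width = (z / denom) * math.sqrt(p_hat * (1 - p_hat) / n + z2 / (4 * n * n))
    return center - half_width, center + half_width


# Shares reported in the text, from n = 89 usable responses.
for label, share in [("AI reduces discrimination", 0.49),
                     ("AI improves workforce quality", 0.44)]:
    lo, hi = wilson_interval(share, 89)
    print(f"{label}: {share:.0%} (95% interval roughly {lo:.0%} to {hi:.0%})")
```

Under those assumptions, the 49% figure is consistent with anything from roughly 39% to 59%, and the 44% figure with roughly 34% to 54%.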

I find these results concerning, not because it’s hard to imagine AI systems that improve workforce quality and reduce discrimination, but because it’s so easy to imagine AI that does neither of these things being sold under false pretenses. Unfortunately, I also don’t know of an easy way to encourage more skepticism among the public. My suspicion is that the public will only become skeptical of this technology as part of a broader anti-tech zeitgeist, such as a revolt against the ills of social media (e.g. Matt Yglesias has a great piece on how Facebook makes us miserable). Until then, I suspect the public will be easily sold on Silicon Valley snake oil.

When I started this project, it was not obvious to me that survey respondents would be optimistic about the prospects of using AI to build out a workforce. Speaking from personal experience, getting rejected constantly hurts. After dozens of rejections in a row, when I felt like I’d exhausted most of the job listings in my area, I started to wonder if there were any issues with my resume that were totally out of my control, such as the school I went to or the previous place I worked. I was hopeful that if I applied to enough places, someone would read my resume, realize that I know what I’m doing, and give me a chance, even if I didn’t fit the mold entirely. And eventually, that happened. Would an AI have given me the same chance to prove myself that the humans who read my resume and eventually hired me did? I have no idea. What I will say is that humans do seem a bit more persuadable than algorithms. Maybe our amenability will be our demise when the stoic, uncompromising robots rise up, but until then, I see it as one of our better attributes.


Major kudos to Matt Darling (@besttrousers) and Daniel Ruffolo (@SFF_Writer_Dan) for their constructive feedback on this series. The views expressed in this article are mine alone and do not necessarily represent the views of anyone who assisted me.
